It requires a certain kind of mind to see beauty in a hamburger bun—Ray Kroc

Maccas. Mickey D’s. The golden arches. Whatever you call it, you know I’m talking about none other than fast-food giant McDonald’s. A survey of 7000 people across six countries 20 years ago by Sponsorship Research International found that more people recognized the golden arches symbol (88%) than the Christian cross (54%). With more than 35,000 restaurants in 118 countries and territories around the world, McDonald’s has come a long way since multi-mixer salesman Ray Kroc happened upon a small fast-food restaurant in 1954.

For an organization of this size and reach, consistency and strong branding are certainly key ingredients in its marketing mix. McDonald’s restaurants all over the world are easily recognized, and while the menu does differ slightly between countries, users know what kind of experience to expect. With this in mind, I wondered whether the same is true for McDonald’s web presence. How successful is a large organization like McDonald’s at delivering a consistent online user experience tailored to suit diverse audiences worldwide without losing its core meaning? I decided to investigate and gave McDonald’s a good grilling by testing ten of its country-specific websites’ home pages in one Chalkmark study.

Preparation time 🥒

First-click testing reveals the first impressions your users have of your designs. This information is useful in determining whether users are heading down the right path when they first arrive at your site. When considering the best way to measure and compare ten of McDonald’s websites from around the world, I chose first-click testing because I wanted to be able to test the visual designs of each website and I wanted to do it all in one research study.

My first job in the setup process was to decide which McDonald’s websites would make the cut.

The approach was to divide the planet up by continent, combined with the requirement that the sites selected be available in my native language (English) in order to interpret the results. I chose: Australia, Canada, Fiji, India, Malaysia, New Zealand, Singapore, South Africa, the UK, and the US. The next task was to figure out how to test this. Ten tasks is ideal for a Chalkmark study, so I made it one task per website; however, determining what those tasks would be was tricky. Serving up the same task for all ten ran the risk of participants tiring of the repetition, but a level of consistency was necessary in order to compare the sites. I decided that all tasks would be different, but tied together with one common theme: burgers.

After all, you don’t win friends with salad.

Launching and sourcing participants 👱🏻👩👩🏻👧👧🏾

When sourcing participants for my research, I often hand the recruitment responsibilities over to Optimal Workshop because it’s super quick and easy; however, this time I decided to do something a bit different. Because McDonald’s is such a large and well-known organization visited by hundreds of millions of people every year, I decided to recruit entirely via Twitter by simply tweeting the link out. Am I three fries short of a happy meal for thinking this would work? Apparently not. In just under a week I had the 30+ completed responses needed to peel back the wrapper on McDonald’s.

Imagine what could have happened if it had been McDonald’s tweeting that out to the burger-loving masses? Ideally when recruiting for a first-click testing study the more participants you can get the more sure you can be of your results, but aiming for 30-50 completed responses will still provide viable results. Conducting user research doesn’t have to be expensive; you can achieve quality results that cut the mustard for free. It’s a great way to connect with your customers, and you could easily reward participants with, say, a burger voucher by redirecting them somewhere after they do the activity—ooh, there’s an idea!
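To put a rough number on "the more participants, the more sure you can be", here is a minimal sketch that computes an approximate 95% Wilson score interval for a task success rate. The function name and the participant counts are hypothetical, chosen only for illustration; they are not figures from this study, and this is not how Chalkmark reports results.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a success proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


# Hypothetical counts: roughly the same 63% success rate at two sample sizes.
for successes, n in [(19, 30), (190, 300)]:
    low, high = wilson_interval(successes, n)
    print(f"{successes}/{n}: {low:.0%} to {high:.0%}")
```

The interval for 30 participants is much wider than the one for 300, which is the trade-off behind the 30-50 guideline: enough responses to see the pattern, with more precision if you can get more.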

Reading the results menu 🍽️

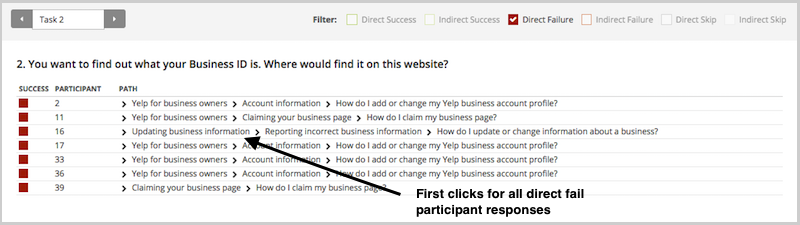

Interpreting the results from a Chalkmark study is quick and easy.

Everything you need is presented under a series of tabs under ‘Analysis’ in the results section of the dashboard:

- Participants: this tab allows you to review details about every participant that started your Chalkmark study and also contains handy filtering options for including, excluding and segmenting.

- Questionnaire: if you included any pre- or post-study questionnaires, you will find the results here.

- Task Results: this tab provides a detailed statistical overview of each task in your study based on the correct areas as defined by you during setup. This functionality allows you to structure your results and speeds up your analysis time because everything you need to know about each task is contained in one diagram. Chalkmark also allows you to edit and define the correct areas retrospectively, so if you forget or make a mistake you can always fix it.

- Clickmaps: under this tab you will find three different types of visual clickmaps for each task showing you exactly where your participants clicked: heatmap, grid and selection. Heatmaps show the hotspots where participants clicked and can be switched to a greyscale view for greater readability. Grid maps show a larger block of colour over the sections that were clicked and include the option to show the individual clicks. The selection map just shows the individual clicks, represented by black dots.
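For the curious, the ideas behind the Task Results and Clickmaps tabs can be sketched in a few lines. This is only an illustration under my own assumptions, not Chalkmark's actual implementation: the `Rect` class, the function names, the 100-pixel cell size and the click coordinates are all made up. A first click counts as a success when it lands inside a correct area, and a grid map is essentially a count of clicks per cell.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """A rectangular 'correct area' in image pixel coordinates (hypothetical)."""
    x: int
    y: int
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def success_rate(clicks, correct_areas):
    """Share of first clicks that land inside any correct area."""
    if not clicks:
        return 0.0
    hits = sum(any(area.contains(x, y) for area in correct_areas)
               for x, y in clicks)
    return hits / len(clicks)


def grid_counts(clicks, cell_size=100):
    """Count clicks per grid cell, keyed by (column, row)."""
    return Counter((x // cell_size, y // cell_size) for x, y in clicks)


# Made-up first clicks as (x, y) pixels; not data from the study.
clicks = [(120, 40), (130, 55), (640, 300), (125, 48)]
menu_link = Rect(x=100, y=30, width=80, height=40)

print(success_rate(clicks, [menu_link]))   # 0.75
print(grid_counts(clicks).most_common(1))  # [((1, 0), 3)]
```

Because the correct areas are just data, redefining them after the fact only means re-running the scoring over the same stored clicks, which is why being able to edit them retrospectively is so handy.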

What the deep fryer gave me 🍟🎁

McDonald’s tested ridiculously well right across the board in the Chalkmark study. Country by country in alphabetical order, here’s what I discovered:

- Australia: 91% of participants successfully identified where to go to view the different types of chicken burgers

- Canada: all participants in this study correctly identified the first click needed to locate the nutritional information of a cheeseburger

- Fiji: 63% of participants were able to correctly locate information on where McDonald’s sources their beef

- India (West and South India site): Were this the real thing, 88% of participants in this study would have been able to order food for home delivery from the very first click, including the 16% who understood that the menu item ‘Convenience’ connected them to this service

- Malaysia: 94% of participants were able to find out how many beef patties are on a Big Mac

- New Zealand: 91% of participants in this study were able to locate information on the Almighty Angus™ burger from the first click

- Singapore: 66% of participants were able to correctly identify the first click needed to locate the reduced-calorie dinner menu

- South Africa: 94% of participants had no trouble locating the first click that would enable them to learn how burgers are prepared

- UK: 63% of participants in this study correctly identified the first click for locating the Saver Menu

- US: 75% of participants were able to find out if burger buns contain the same chemicals used to make yoga mats based on where their first clicks landed

Three reasons why McDonald’s nailed it 🍔 🚀

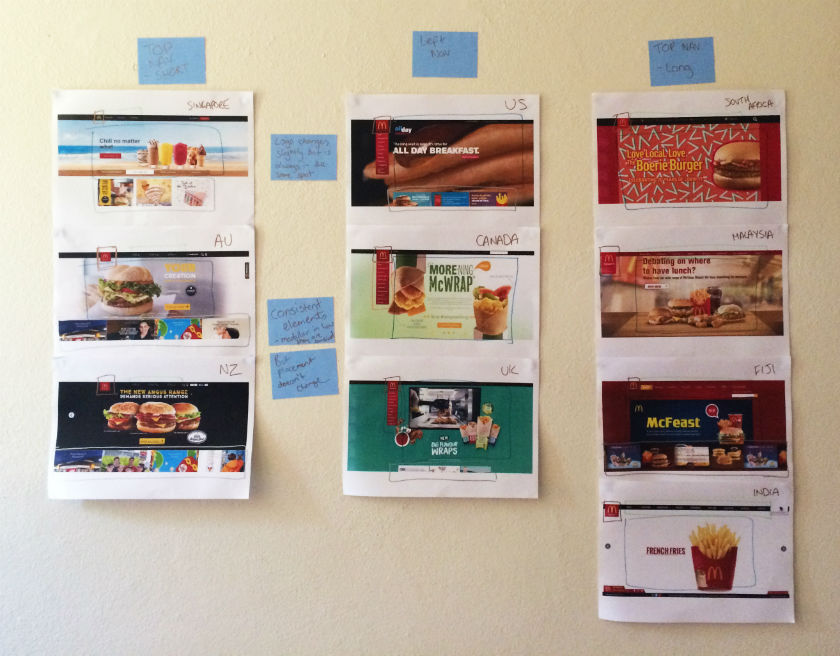

This study clearly shows that McDonald’s are kicking serious goals in the online stakes but before we call it quits and go home, let’s look at why that may be the case. Approaching this the way any UXer worth their salt on their fries would, I stuck all the screens together on a wall, broke out the Sharpies and the Tesla Amazing magnetic notes (the best invention since Post-it notes), and embarked on the hunt for patterns and similarities—and wow did I find them!

Navigation pattern use

Across the ten websites, I observed just two distinct navigation patterns: navigation menus at the top and to the left. The sites with a top navigation menu could also be broken down into two further groups: those with three labels (Australia, New Zealand, and Singapore) and those with more than three labels (Fiji, India, Malaysia, and South Africa). Australia and New Zealand shared the exact same labelling of ‘eat’, ‘learn’, and ‘play’ (despite being distinct countries), whereas the others had their own unique labels but with some subject matter crossover; for example, ‘People’ versus ‘Our People’.

Canada, the UK, and the US all had the same look and feel with their left side navigation bar, but each with different labels. All three still had navigation elements at the top of the page, but the main content that the other seven countries had in their top navigation bars was located in that left sidebar.

These patterns ensure that each site is tailored to its unique audience while still maintaining some consistency so that it’s clear they belong to the same entity.

Logo lovin’ it

If there’s one aspect that screams McDonald’s, it’s the iconic golden arches on the logo. Across the ten sites, the logo does vary slightly in size, color, and composition, but it’s always in the same place and the golden arches are always there. Logo consistency is a no-brainer, and in this case McDonald’s clearly recognizes the strengths of its logo and understands which pieces it can add or remove without losing its identity.

Subtle consistencies in the page layouts

Navigation and logo placement weren’t the only connections I could draw from looking at my wall of McDonald’s. There were also some very interesting but subtle similarities in the page layouts. The middle of the page is always used for images and advertising content, including videos and animated GIFs. The US version featured a particularly memorable advertisement for its all-day breakfast menu, complete with animated maple syrup slowly drizzling its way over a stack of hotcakes.

The bottom of the page is consistently used on most sites to house more advertising content in the form of tiles. The sites without the tiles left this space blank.

Familiarity breeds … usability?

Looking at these results, it is quite clear that the same level of consistency and recognition found between McDonald’s restaurants is also present between the different country websites. This made me wonder: what role does familiarity play in determining usability? In investigating, I found a few interesting articles on the subject. This article by Colleen Roller on UXmatters discusses the connection between cognitive fluency and familiarity, and the impact this has on decision-making. Colleen writes:

“Because familiarity enables easy mental processing, it feels fluent. So people often equate the feeling of fluency with familiarity. That is, people often infer familiarity when a stimulus feels easy to process.”

If we’re familiar with an item, we don’t have to think too hard about it, and this reduction in performance load can make it feel easier to use. I also found this fascinating read on Smashing Magazine by Charles Hannon that explores why Apple were able to claim ‘You already know how to use it’ when launching the iPad. It’s well worth a look!

Oh, and about those yoga mats … the answer is yes.