We love getting stuck into scary, hairy problems to make things better here at Trade Me. One challenge for us in particular is how best to navigate customer reaction to any change we make to the site, the app, the terms and conditions, and so on. Our customers are passionate both about the service we provide — an online auction and marketplace — and its place in their lives, and are rightly forthcoming when they're displeased or frustrated. We therefore rely on our Customer Service (CS) team to give customers a voice, and to respond with patience and skill to customer problems ranging from incorrectly listed items to reports of abusive behavior.

The CS team uses a Customer Relationship Management (CRM) system, Trade Me Admin, to monitor support requests and manage customer accounts. As the spectrum of Trade Me's services and the complexity of the public website have grown rapidly, the CRM system has, to be blunt, been updated in ways which have not always been the prettiest. Links for new tools and reports have simply been added to existing pages, and old tools for services we no longer operate have not always been removed. Thus, our latest focus has been to improve the user experience of the CRM system for our CS team.

And though on the surface it looks like we're working on a product with only 90 internal users, our changes will have flow-on effects for tens of thousands of our members at any given time (out of a total of around 3.6 million members).

The challenges of designing customer service systems

We face unique challenges designing customer service systems. Robert Schumacher from GfK summarizes these problems well. I’ve paraphrased him here and added an issue of my own:

1. Customer service centres are high volume environments — Our CS team has thousands of customer interactions every day, and each team member travels similar paths in the CRM system.

2. Wrong turns are amplified — With so many similar interactions, a system change that adds a minute more to processing customer queries could slow down the whole team and result in delays for customers.

3. Two people relying on the same system — When the CS team takes a phone call from a customer, the CRM system is serving both people: the CS person who is interacting with it, and the caller who directs the interaction. Trouble is, the caller can't see the paths the system is forcing the CS person to take. For example, in a previous job a client’s CS team would always ask callers two or three extra security questions, not to confirm identities but to cover up the delay between answering the call and the right page loading in the system.

4. Desktop clutter — As a result of the plethora of tools, reports and systems, the desktop of the average CS team member is crowded with open windows and tabs. They have to remember where things are and also how to interact with the different tools and reports, all of which may have been created independently (i.e. they work differently). This presents quite the cognitive load.

5. CS team members are expert users — They use the system every day, and will all have their own techniques for interacting with it quickly and accurately. They've also probably come up with their own solutions to system problems, which they might be very comfortable with. As Schumacher says, 'A critical mistake is to discount the expert and design for the novice. In contact centers, novices become experts very quickly.'

6. Co-design is risky — Co-design workshops, where the users become the designers, are all the rage, and are usually pretty effective at getting great ideas quickly into systems. But expert users almost always end up regurgitating the system they're familiar with, as they've been trained by repeated use of systems to think in fixed ways.

7. Training is expensive — Complex systems require more training, so if your call centre has high churn (ours doesn’t – most staff stick around for years) then you’ll be spending a lot of money.

…and the one I’ve added:

8. Powerful does not mean easy to learn — The ‘it must be easy to use and intuitive’ design rationale is often the cause of badly designed CRM systems. Designers mistakenly design something simple when they should be designing something powerful. Powerful is complicated, dense, and often less easy to learn, but once mastered lets staff really motor.

Our project focus

Our improvement of Trade Me Admin is focused on fixing the shattered IA and restructuring the key pages to make them perform even better, bringing them into a new code framework. We're not redesigning the reports, tools, code or even the interaction for most of the reports, as this would take many years of effort.

Watching our own staff use Trade Me Admin is like watching someone juggle six or seven things. The system requires them to visit multiple pages, hold multiple facts in their head, pattern and problem-match across those pages, and follow their professional intuition to get to the heart of a problem. Where the system works well is on some key, densely detailed hub pages. Where it works badly, staff have to navigate click farms with arbitrary link names, type URLs by hand to get to hidden reports, and generally expend more effort on finding the answer than on comprehending the answer.

Groundwork

The first thing that we did was to sit with CS and watch them work and get to know the common actions they perform. The random nature of the IA and the plethora of dead links and superseded reports became apparent. We surveyed teams, providing them with screen printouts and three highlighter pens to colour things as green (use heaps), orange (use sometimes) and red (never use). From this, we were able to immediately remove a lot of noise from the new IA. We also saw that specific teams used certain links but that everyone used a core set. Initially focussing on the core set, we set about understanding the tasks under those links.
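To make the highlighter exercise concrete, here is a minimal sketch of how those printouts could be tallied. It is not the tooling we used: the team names, link names and the rule that a "core" link is one every surveyed team marks green or orange are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical survey responses: (team, link, highlighter colour).
# Team and link names are invented for this example.
responses = [
    ("team_a", "Member search", "green"),
    ("team_b", "Member search", "green"),
    ("team_c", "Member search", "orange"),
    ("team_a", "Refund tool", "green"),
    ("team_b", "Refund tool", "red"),
    ("team_c", "Refund tool", "red"),
    ("team_a", "Old report", "red"),
    ("team_b", "Old report", "red"),
    ("team_c", "Old report", "red"),
]

def classify_links(responses):
    """Sort links into 'core', 'team_specific' and 'unused' buckets."""
    colours_by_link = defaultdict(dict)
    for team, link, colour in responses:
        # Assumption: one colour per team per link.
        colours_by_link[link][team] = colour

    buckets = {"core": [], "team_specific": [], "unused": []}
    for link, by_team in colours_by_link.items():
        used_by = [t for t, c in by_team.items() if c in ("green", "orange")]
        if not used_by:
            buckets["unused"].append(link)         # red everywhere: candidate for removal
        elif len(used_by) == len(by_team):
            buckets["core"].append(link)           # every team uses it
        else:
            buckets["team_specific"].append(link)  # only some teams use it
    return buckets

buckets = classify_links(responses)
print(buckets)
```

The "unused" bucket corresponds to the noise we could strip out straight away, and the "core" bucket to the links we focused on first.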

The complexity of the job soon became apparent: with a system like Trade Me Admin, it is possible to do the same thing in many different ways. Most CRM systems are detailed enough for there to be more than one way to achieve the same end, and often it’s not possible to get a definitive answer, only to ‘build a picture’. There’s no one-to-one mapping of task to link. Links were also often arbitrarily named, ‘SQL Lookup’ being one example. The highly trained user base depends on muscle memory to find these links. This meant that when asked something like “What and where is the policing enquiry function?”, many couldn’t tell us what or where it was, but when they needed the report it contained they found it straight away.

Sort of difficult

Therefore, it came as little surprise that staff found the subsequent card sort task quite hard. We renamed the links to better describe their associated actions, and of course, they weren't in the same location as in Trade Me Admin. So instead of taking the predicted 20 minutes, the sort was taking upwards of 40 minutes. Not great when staff are supposed to be answering customer enquiries!

We noticed some strong trends in the results, with links clustering around some of the key pages and tasks (like 'member', 'listing', 'review member financials', and so on). The results also confirmed something that we had observed — that there is a strong split between two types of information: emails/tickets/notes and member info/listing info/reports.
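For readers who haven't analysed a card sort before, the clustering mentioned above usually comes from counting how often two cards land in the same group. The sketch below shows that idea with invented cards and sorts. It is not our data, and a card sorting tool will normally do this calculation for you.

```python
from collections import Counter
from itertools import combinations

# Hypothetical card sorts: one list of groups per participant.
# Card names are invented for this example.
sorts = [
    [{"Member details", "Member financials"}, {"Emails", "Notes"}],
    [{"Member details", "Member financials", "Notes"}, {"Emails"}],
    [{"Member details", "Member financials"}, {"Emails", "Notes"}],
]

def co_occurrence(sorts):
    """Return, for each pair of cards, the share of participants who grouped them together."""
    counts = Counter()
    for groups in sorts:
        for group in groups:
            # Sort the names so each pair has one canonical key.
            for a, b in combinations(sorted(group), 2):
                counts[(a, b)] += 1
    return {pair: n / len(sorts) for pair, n in counts.items()}

similarity = co_occurrence(sorts)
for pair, share in sorted(similarity.items(), key=lambda item: -item[1]):
    print(pair, round(share, 2))
```

Pairs with a high share are the ones that cluster strongly. In this toy data the member cards always sit together while emails and notes form a second, looser cluster, which is the same kind of split described above.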

We built and tested two IAs

After card sorting, we created two new IAs, and then customized each of them for the three CS teams, giving us six IAs to test. Each team was then asked to complete two tree tests, with 50% doing one first and 50% doing the other first. At first glance, the results of the tree test were okay, at around 61%, but still 'Could try harder'. We saw very little overall difference between the success of the two structures, but definitely some differences in task success. And we also came across an interesting quirk in the results.
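Splitting the order 50/50 is a simple form of counterbalancing, so that neither tree benefits from participants having warmed up on the other. A rough sketch of how such an assignment could be made follows. The participant IDs, tree names and fixed random seed are assumptions for the example, not a description of how we actually allocated staff.

```python
import random

def assign_orders(participants, trees=("tree_a", "tree_b"), seed=0):
    """Give half the participants one tree first and half the other tree first."""
    rng = random.Random(seed)      # fixed seed so the split is reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)          # randomise who ends up in which half
    orders = {}
    for i, person in enumerate(shuffled):
        # Alternate the order down the shuffled list.
        orders[person] = trees if i % 2 == 0 else trees[::-1]
    return orders

orders = assign_orders(["p1", "p2", "p3", "p4", "p5", "p6"])
for person, order in sorted(orders.items()):
    print(person, "->", " then ".join(order))
```

With an even number of participants this gives an exact half-and-half split. With an odd number, one order gets one extra person.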

Closer analysis of the pie charts with an expert in Trade Me Admin showed that some ‘wrong’ answers would give part of the picture required. In some cases this was so much the case that I reclassified those answers as ‘correct’, as they were more right than wrong. Typically, in a real world situation, staff might check several reports in order to build a picture. This ambiguous nature is hard to replicate in a tree test, which wants definitive yes or no answers. Keeping the tasks both simple to follow and comprehensive proved harder than we expected.

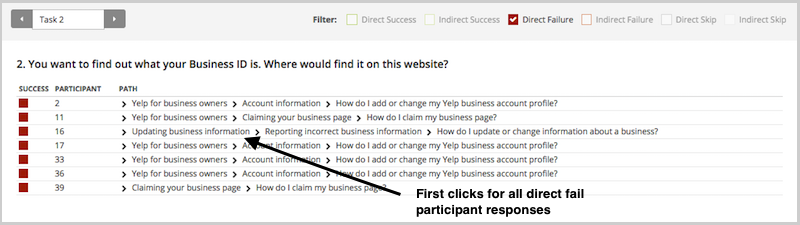

For example, we set a task that asked participants to investigate whether two customers had been bidding on each other's auctions. When we looked at the pietree (see the screenshot above), we noticed some participants had clicked on 'Search Members', thinking they needed to locate the customer accounts, when the task had presumed that the customers had already been found. This is a useful insight into writing more comprehensive tasks that we can take with us into our next tests.

What’s clear from analysis is that although it’s possible to provide definitive answers for a typical site’s IAs, for a CRM like Trade Me Admin this is a lot harder. Devising and testing the structure of a CRM has proved a challenge for our highly trained audience, who are used to the current system and naturally find it difficult to see and do things differently. Once we had reclassified some of the answers as ‘correct’, one of the two trees was a clear winner: it had gone from 61% to 69%. The other tree had only improved slightly, from 61% to 63%.
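The effect of reclassifying answers is easy to see if you score the same set of results twice: once against the single 'official' destination and once against a wider set that includes destinations giving enough of the picture. The sketch below uses invented results for one task. The task ID, destination labels and counts are assumptions and are not meant to reproduce our 61% and 69% figures.

```python
def success_rate(results, accepted):
    """Share of results whose chosen destination counts as correct.

    results:  list of (task, chosen_destination) pairs
    accepted: dict mapping each task to the set of destinations scored as correct
    """
    hits = sum(1 for task, chosen in results if chosen in accepted[task])
    return hits / len(results)

# Hypothetical results for one task, ten participants.
results = (
    [("bidding_check", "Bid history report")] * 6
    + [("bidding_check", "Member listings")] * 1
    + [("bidding_check", "Search Members")] * 3
)

# Strict scoring: only the destination we originally marked as correct.
strict = {"bidding_check": {"Bid history report"}}
# Lenient scoring: also accept a destination judged 'more right than wrong'.
lenient = {"bidding_check": {"Bid history report", "Member listings"}}

strict_rate = success_rate(results, strict)
lenient_rate = success_rate(results, lenient)
print(f"strict: {strict_rate:.0%}, lenient: {lenient_rate:.0%}")
```

Keeping both scores side by side makes it obvious how much of an improvement comes from the structure itself and how much from the decision about what counts as correct.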

There were still elements of our winning structure that were performing sub-optimally, though. Generally, the problems were to do with labelling, where, in some cases, we had attempted to disambiguate those ‘SQL lookup’-type labels but in the process, confused the team. We were left with the dilemma of whether to go with the new labels and make the system initially harder to use for existing staff but easier to learn for new staff, or stick with the old labels, which are harder to learn. My view is that any new system is going to see an initial performance dip, so we might as well change the labels now and make it better.

This work has highlighted the importance of carefully structuring questions in a tree test, particularly in light of the ‘start anywhere/go anywhere’ nature of a CRM. The diffuse but powerful nature of a CRM means you need to consider the answer options in a tree test carefully, and decide how close to a ‘100% correct’ answer you want to get.

Development work has begun so watch this space

It's great to see that our research is influencing the next stage of the CRM system, and we're looking forward to seeing it go live. Of course, our work isn't over, and nor would we want it to be! Alongside the redevelopment of the IA, I've been redesigning the key pages from Trade Me Admin, and continuing to conduct user research, including first click testing using Chalkmark.

This project has been governed by a steadily developing set of design principles, focused on complex CRM systems and the specific needs of their audience. Two of these principles are to reduce navigation and to design for experts, not novices, which means creating dense, detailed pages. It's intense, complex, and rewarding design work, and we'll be exploring this exciting space in more depth in upcoming posts.