At Optimal Workshop, we're dedicated to building the best user research platform to empower you with the tools to better understand your customers and create intuitive digital experiences. We're thrilled to announce some game-changing updates and new products that are on the horizon to help elevate the way you gather insights and keep customers at the heart of everything you do.

What’s new…

Integration with Figma 🚀

Last month, we joined forces with design powerhouse Figma to launch our integration. You can import images from Figma into Chalkmark (our click-testing tool) in just a few clicks, streamlining your workflow and giving you the insights to make decisions based on data, not hunches and opinions.

What’s coming next…

Session Replays 🧑‍💻

With session replays, you can focus on other tasks while Optimal Workshop automatically captures card sort sessions for you to watch in your own time. Gain valuable insights into how participants engage with and interpret a card sort without the hassle of running moderated sessions. The first iteration of session replays captures study interactions only; it will not include audio or face recording, though we are exploring both for future iterations. Session replays will be available in tree testing and click-testing later in 2024.

Reframer Transcripts 🔍

Say goodbye to juggling note-taking and hello to more efficient ways of working with Transcripts! We're continuing to add capability to Reframer, our qualitative research tool, now including the import of interview transcripts. Save time and reduce human errors and oversights by importing transcripts, then tagging and analyzing observations, all within Reframer. We’re committed to building on transcripts with video and audio transcription capability in the future, and we’ll keep you in the loop on when to expect those releases.

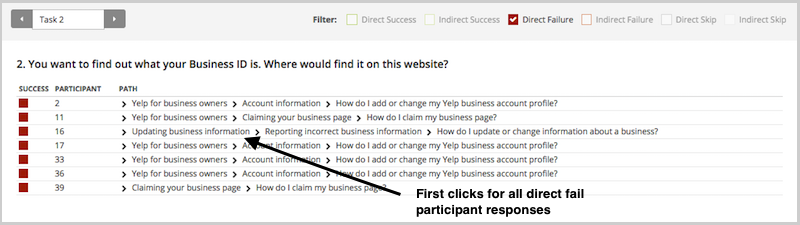

Prototype testing 🧪

The team is fizzing to be working on a new Prototype testing product designed to expand your research methods and help you test prototypes easily from the Optimal Workshop platform. Testing prototypes early and often is an important step in the design process, saving you time and money before you invest too heavily in the build. We are working with customers on delivering the first iteration of this exciting new product. Stay tuned for Prototypes, coming in the second quarter of 2024.

Workspaces 🎉

Making it easier for large organizations to manage teams and collaborate more effectively on projects in Optimal Workshop is a big focus for 2024. Workspaces are the first step towards empowering organizations to better manage multiple teams with projects. Projects will allow greater flexibility over who can see what, encouraging open working and collaboration while also offering the ability to make projects private. The privacy feature is available on Enterprise plans.

Questions upgrade ❓

Our survey product, Questions, is in for a glow-up in 2024 💅. The team is enjoying working with customers, collecting and reviewing feedback on how to improve Questions, and will be sharing more on this in the coming months.

Help us build a better Optimal Workshop

We are looking for new customers to join our research panel to help influence product development. From time to time, you’ll be invited to join us for interviews or surveys, and you’ll be rewarded for your time with a thank-you gift. If you’d like to join the panel, email product@optimalworkshop.com.