You’ve just finished running your card sort. The study has closed and the data is waiting to be analyzed. It’s time to take a look at the analysis side of card sorting, specifically in our tool OptimalSort. Let’s get started.

A note on analysis 📌

When it comes to analysis, there are essentially two types. There’s exploratory analysis (when you look through data to get impressions, pull out useful ideas and be creative) and statistical analysis (which really just comes down to the numbers). These two types of analysis also go by qualitative and quantitative, respectively.

You’re able to get fantastic insights from both forms.

“Remember that you are the one who is doing the thinking, not the technique… you are the one who puts it all together into a great solution. Follow your instincts, take some risks, and try new approaches.” Donna Spencer, Maadmob.

Getting started with analysis 🏁

Whenever you wrap up a study using our card sorting tool, you’ll want to kick off your analysis by heading to the Results Overview section. It’s here that you’ll be able to see how many people actually took part in the study, the average time taken and general statistics about the study itself.

This is useful data to include in presentations to interested stakeholders, just to give them a more holistic view of your research.

Digging into your participant data ⛏

With the Results Overview section out of the way, you can make your way over to the Participants Table. This is where you can find information about the individual people who took part in your card sort. You can also start to filter your data here.

Here are just a few of the different actions that you can take:

- Review your participants, and include or exclude certain individuals based on their card sorts. This is a useful tool if you want to use your data in different ways.

- Segment and reload your results. This function can allow you to view data from individuals or groups of your choosing.

- Add additional card sorts. If you also decided to run manual (in-person) card sorts using printed cards, you can add this data here.

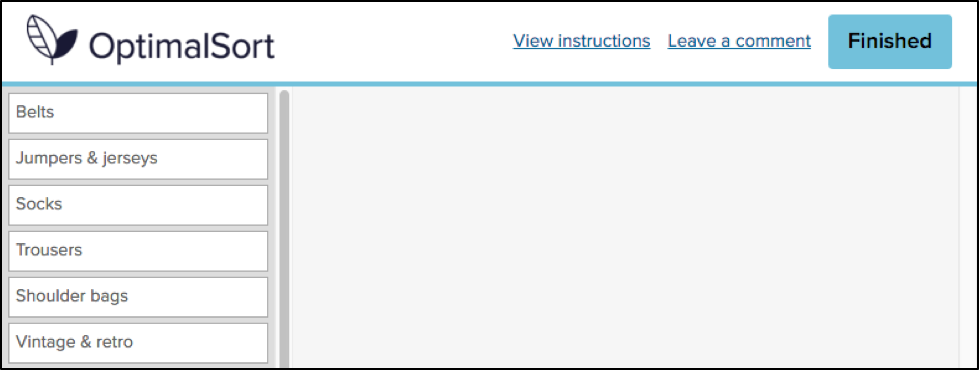

Analyzing open and hybrid card sort data 🕵️‍♂️

The Categories tab is the best place to go for open and hybrid card sort results. Take some time to scan the categories people came up with and you’ll be able to quickly build up a good understanding of their ‘mental models’, or how they perceived the theme of your cards.

Consider how different the categories might look for cards containing food items, for example. Some participants might create categories reflecting supermarket aisles, while others might create categories reflecting food groups.

A good place to get started here is by refining your data. Standardize any categories that have similar labels (whether they differ in wording, spelling or capitalization). Hybrid card sorts have some set categories, and these will already be standardized.

Note: Before you start throwing categories with similar labels together, take a closer look to see if people had the same conceptual approach. Here’s an example from our card sorting 101 guide:

Of the 15 groups with the word ‘Animal’ in the label, 13 had a similar set of cards, but two participants had labeled their categories slightly differently (‘Animals and Environment’ and ‘Animals and Nature’) and had thus included extra cards the others didn’t have (‘Glaciers melting faster than previously thought’, for example).
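If you export your categories and want to do a first pass on the labels yourself, the idea can be sketched in a few lines of Python. This is only an illustration, not how OptimalSort standardizes categories: the function names, the normalization rule and the sample data are all assumptions made for the example. It groups labels that differ only in case, spacing or punctuation, and then checks how much the cards overlap before you decide to merge anything.

```python
import re
from collections import defaultdict

def normalize_label(label):
    """Lowercase the label and collapse punctuation/whitespace (assumed rule)."""
    cleaned = re.sub(r"[^a-z0-9]+", " ", label.lower())
    return cleaned.strip()

def group_by_label(categories):
    """Group (label, cards) pairs whose normalized labels are identical."""
    groups = defaultdict(list)
    for label, cards in categories:
        groups[normalize_label(label)].append((label, set(cards)))
    return dict(groups)

def card_overlap(cards_a, cards_b):
    """Jaccard overlap: shared cards divided by all cards in either category."""
    union = cards_a | cards_b
    return len(cards_a & cards_b) / len(union) if union else 1.0

# Hypothetical categories created by three participants
categories = [
    ("Animals", ["Whale migration", "Bee decline"]),
    ("animals ", ["Whale migration", "Bee decline"]),
    ("Animals and Nature", ["Whale migration", "Glaciers melting"]),
]

groups = group_by_label(categories)
for label, members in groups.items():
    print(label, "->", len(members), "categories")

# Compare card sets before merging two similar-sounding categories
overlap = card_overlap(set(categories[0][1]), set(categories[2][1]))
print(f"Overlap between 'Animals' and 'Animals and Nature': {overlap:.2f}")
```

A low overlap score is a hint that two similar labels reflect different conceptual approaches, so they may be better left as separate categories.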

Reviewing the Similarity Matrix 🤔

One really useful tool for understanding how your participants think is the Similarity Matrix. This view shows you, for each pair of cards, the percentage of participants who grouped those two cards together.

The most closely related pairings are clustered along the right edge. Higher agreement between participants on which cards go together equates to darker and larger clusters.

There are a few different ways to use the insights from the Similarity Matrix:

- Put together a draft website structure based on the clusters you see on the right.

- Identify which card pairings are most common (and as a result should probably go together on your website).

- Identify which card pairings are least common so you don’t need to waste time considering how they might work on your website.
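To make the numbers behind the matrix a little more concrete, here’s a minimal sketch of how a pairwise percentage like this could be calculated from raw sorts. The data structure (one dictionary of category to cards per participant) and the sample cards are assumptions for the example, and the clustering and ordering that OptimalSort applies to the matrix isn’t covered here.

```python
from collections import Counter
from itertools import combinations

def similarity_matrix(sorts):
    """Return {(card_a, card_b): percent of participants who grouped the pair}."""
    pair_counts = Counter()
    for sort in sorts:
        for cards in sort.values():
            # Every pair of cards inside one category counts once per participant
            for pair in combinations(sorted(set(cards)), 2):
                pair_counts[pair] += 1
    return {pair: 100 * count / len(sorts) for pair, count in pair_counts.items()}

# Hypothetical results from four participants
sorts = [
    {"Fruit": ["Apple", "Banana"], "Dairy": ["Milk", "Cheese"]},
    {"Snacks": ["Apple", "Banana", "Cheese"], "Drinks": ["Milk"]},
    {"Fresh": ["Apple", "Banana", "Milk"], "Other": ["Cheese"]},
    {"Produce": ["Apple", "Banana"], "Fridge": ["Milk", "Cheese"]},
]

matrix = similarity_matrix(sorts)
for pair, pct in sorted(matrix.items(), key=lambda item: -item[1]):
    print(pair, f"{pct:.0f}%")
```

Pairs that no participant grouped together simply don’t appear in the result, which is the same as 0%.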

Spotting popular card groupings 🔍

Dendrograms help you spot popular groups of cards, as well as get a general feel for how similar or different your participants’ card sorts were to each other.

There are two dendrograms to explore:

- More than 30 card sort participants: The Actual Agreement Method (AAM) dendrogram gives you the data straight: “X% of participants agree with this exact grouping”.

- Fewer than 30 card sort participants: The Best Merge Method (BMM) tells you “X% of participants agree with parts of this grouping”, and so enables you to extract as much as you can from the data.
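The difference between agreeing with an exact grouping and agreeing with parts of it can be illustrated with a rough sketch. To be clear, this is not the AAM or BMM algorithm. Both functions below are hypothetical stand-ins, run on made-up data, that simply show why a whole-group measure is stricter than a pair-by-pair one.

```python
from itertools import combinations

def exact_agreement(sorts, cards):
    """Share of participants who put all of `cards` together in one category."""
    target = set(cards)
    agreeing = sum(
        any(target <= set(group) for group in sort.values())
        for sort in sorts
    )
    return agreeing / len(sorts)

def pairwise_agreement(sorts, cards):
    """Average share of participants who grouped each pair within `cards`."""
    pairs = list(combinations(sorted(set(cards)), 2))
    return sum(exact_agreement(sorts, pair) for pair in pairs) / len(pairs)

# Hypothetical results from four participants
sorts = [
    {"Fruit": ["Apple", "Banana"], "Dairy": ["Milk", "Cheese"]},
    {"Snacks": ["Apple", "Banana", "Cheese"], "Drinks": ["Milk"]},
    {"Fresh": ["Apple", "Banana", "Milk"], "Other": ["Cheese"]},
    {"Produce": ["Apple", "Banana"], "Fridge": ["Milk", "Cheese"]},
]

grouping = ["Apple", "Banana", "Milk"]
strict = exact_agreement(sorts, grouping)
partial = pairwise_agreement(sorts, grouping)
print(f"Whole grouping: {strict:.0%}, pair by pair: {partial:.0%}")
```

With only a handful of participants the strict measure drops off quickly, which is one way to see why a more forgiving view can be helpful for smaller studies.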

Looking for alternative approaches 👀

The Participant-Centric Analysis (PCA) view can be useful when you have a lot of results. It’s quite simple. Basically, it aims to find the most popular grouping strategy, and then find two more popular alternatives among participants who disagreed with the first strategy.

This approach is called Participant-Centric Analysis because every response (from every participant) is treated as a potential solution, and then ranked for similarity with other responses. So if you see a card sort with an 11/43 agreement score, it means 10 other participants sorted their cards into groups similar to these ones.
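Here’s a minimal sketch of the general idea of ranking each response by how many other responses resemble it. The similarity measure (Jaccard overlap of card pairs) and the 0.5 threshold are assumptions picked for the example, not the measure OptimalSort uses, and the sample data is made up.

```python
from itertools import combinations

def pair_set(sort):
    """All pairs of cards that a participant placed in the same category."""
    pairs = set()
    for cards in sort.values():
        pairs.update(combinations(sorted(set(cards)), 2))
    return pairs

def sort_similarity(sort_a, sort_b):
    """Jaccard overlap between the card pairs of two sorts (assumed measure)."""
    a, b = pair_set(sort_a), pair_set(sort_b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def agreement_scores(sorts, threshold=0.5):
    """For each sort, count the sorts (itself included) at least `threshold` similar."""
    scores = []
    for i, candidate in enumerate(sorts):
        similar = sum(
            1 for j, other in enumerate(sorts)
            if i == j or sort_similarity(candidate, other) >= threshold
        )
        scores.append(similar)
    return scores

# Hypothetical results from four participants
sorts = [
    {"Fruit": ["Apple", "Banana"], "Dairy": ["Milk", "Cheese"]},
    {"Snacks": ["Apple", "Banana", "Cheese"], "Drinks": ["Milk"]},
    {"Fresh": ["Apple", "Banana", "Milk"], "Other": ["Cheese"]},
    {"Produce": ["Apple", "Banana"], "Fridge": ["Milk", "Cheese"]},
]

scores = agreement_scores(sorts)
for index, score in enumerate(scores, start=1):
    print(f"Participant {index}: {score}/{len(sorts)}")
```

As in the 11/43 example, each score counts the participant’s own sort, so a score of 2/4 means one other participant sorted their cards in a similar way.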

Taking the next step: Run a card sort and try analysis for yourself 🃏

Now that we’ve taken a bit of a deep dive into the analysis side of card sorting in OptimalSort, it’s time to take the tool for a spin and start generating your own data.

Getting started is easy. If you haven’t already, simply sign up for a free account (you don’t need a credit card) and start a card sort. You can also practice by creating a card sort and sending it out to your coworkers, friends or family. Once you start to see results trickling in, you can start to make sense of the data.

For more information, check out the card sorting 101 guide that we’ve put together, or our introduction to card sorting on the Optimal Workshop Blog.

Happy testing!