Recently, everyone in the design industry has been talking about design sprints. So, naturally, the team at Optimal Workshop wanted to see what all the fuss was about. I picked up a copy of The Sprint Book and suggested to the team that we try out the technique.

To keep momentum, we identified a current problem and decided to run the sprint just two weeks later. The short notice was a bit of a challenge, but in the end we made it work. Here’s a rundown of how things went, what worked, what didn’t, and the lessons we learned.

A sprint is an intensive, focused period in which a team designs and tests a product or feature, with the goal of learning whether to keep investing in the idea. By the end of the sprint, the idea should be either validated or invalidated. This saves time and resources further down the track, because the team can pivot early if the idea doesn’t float.

If you’re following The Sprint Book, you might have a structured five-day plan that looks like this:

- Day 1 - Understand: Discover the business opportunity, the audience, the competition, the value proposition and define metrics of success.

- Day 2 - Diverge: Explore, develop and iterate creative ways of solving the problem, regardless of feasibility.

- Day 3 - Converge: Identify ideas that fit the next product cycle and explore them in further detail through storyboarding.

- Day 4 - Prototype: Design and prepare prototype(s) that can be tested with people.

- Day 5 - Test: User testing with the product's primary target audience.

When you’re running a design sprint, it’s important to have the right people in the room. A sprint is all about focus and working fast, and you need the right people on hand so that nothing blocks you along the way. Team, stakeholder, and expert buy-in is key: this is not a task just for a design team!

After getting buy-in and picking out the people who should be involved (developers, designers, product owner, customer success rep, marketing rep, user researcher), these were my next steps:

Pre-sprint

- Read the book

- Panic

- Send out invites

- Write the agenda

- Book a meeting room

- Organize food and coffee

- Get supplies (Post-its, paper, Sharpies, laptops, chargers, cameras)

The sprint

Due to scheduling issues, we had to split the sprint around a weekend. Sprint guidelines suggest you hold it over Monday to Friday. That is a nice block of time, but we had to do Thursday to Thursday with the weekend off in between, which turned out to work really well. We are all self-confessed introverts and, to be honest, the thought of spending five solid days workshopping was daunting. About two days in, we were exhausted. We went away for the weekend and came back on Monday feeling sociable and recharged, ready to examine the work we’d done in the first two days with fresh eyes.

Design sprint activities

During our sprint we completed a range of activities. Here’s a list of some that worked well for us. You can find out more about how to run most of these on The Sprint Book website, or check out some great resources at Design Sprint Kit.

Lightning talks

We kicked off our sprint by having each person give a quick 5-minute talk on one of the topics listed below. This gave us all an overview of the whole project. Because we each had to present, each of us became the expert in our area and engaged with the topic (rather than just listening to one person deliver all the information).

Our lightning talk topics included:

- Product history - where we’ve come from, so the whole group understands who we are and why we’ve made the things we’ve made.

- Vision and business goals - (from the product owner or CEO) a look ahead not just of the tools we provide but where we want the business to go in the future.

- User feedback - what users have been saying so far about the idea we’ve chosen for our sprint. This information is collected by our User Research and Customer Success teams.

- Technical review - an overview of our tech and anything we should be aware of (or a look at possible available tech). This is a good chance to get an engineering lead in to share technical opportunities.

- Comparative research - what else is out there, and how other teams or products have addressed this problem space.

Empathy exercise

I asked the sprinters to participate in an exercise so that we could gain empathy for those who are using our tools. The task was to pretend we were one of our customers who had to present a dendrogram to some of our team members who are not involved in product development or user research. In this frame of mind, we had to talk through how we might start to draw conclusions from the data presented to the stakeholders. We all gained more empathy for what it’s like to be a researcher trying to use the graphs in our tools to gain insights.

How Might We

In the beginning, it’s important to be open to all ideas. One way we did this was to phrase questions in the format: “How might we…” At this stage (day two) we weren’t trying to come up with solutions. We were trying to work out what problems there were to solve. ‘We’ is a reminder that this is a team effort, and ‘might’ reminds us that it’s just one suggestion that may or may not work (and that’s OK). These questions then get voted on and moved into a workshop for generating ideas (see Crazy 8s).

Read more detailed instructions on how to run a ‘How might we’ session on the Design Sprint Kit website.

Crazy 8s

This activity is a super quick-fire idea generation technique. The gist of it is that each person gets a piece of paper folded into eight sections and has eight minutes to come up with eight ideas (really rough sketches). When time is up, it’s pens down and everyone reviews each other’s ideas.

In our sprint, we gave each person Post-it notes and paper, and set the timer for eight minutes. At the end of the activity, we put all the sketches on a wall (this is where the art gallery exercise comes in).

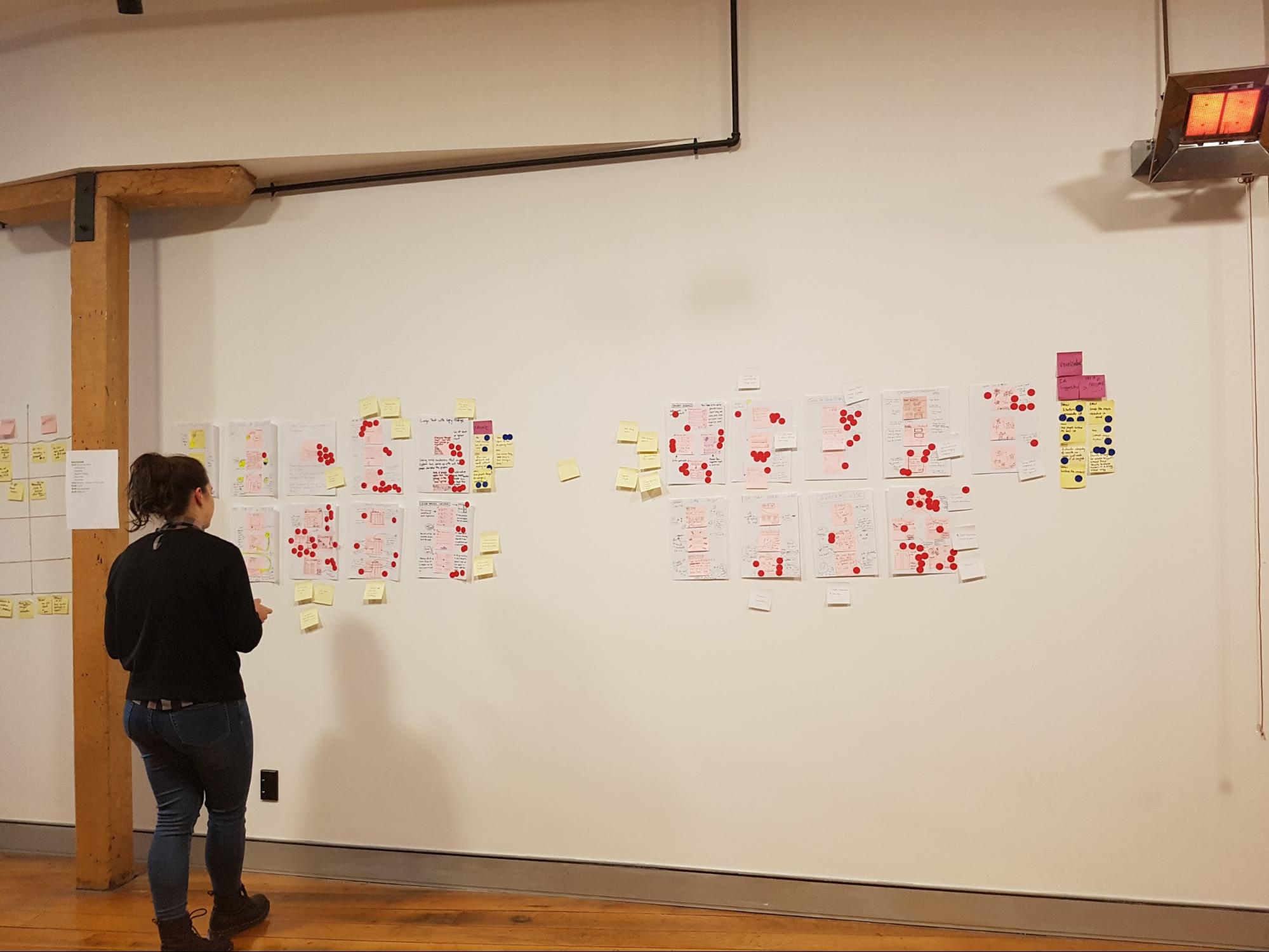

Art gallery/Silent critique

The art gallery is the place where all the sketches go. We give everyone dot stickers so they can vote and pull out key ideas from each sketch. This is done silently, as the ideas should be understood without needing explanation from the person who made them. At the end of it you’ve got a kind of heat map, and you can see the ideas that stand out the most. After this first round of voting, the authors of the sketches get to talk through their ideas, then another round of voting begins.

Usability testing and validation

The key part of a design sprint is validation. For one of our sprints we had two parts of our concept that needed validating. To test one part we conducted simple user tests with other members of Optimal Workshop (the feature was an internal tool). For the second part we needed to validate whether we had the data to continue with this project, so we had our data scientist run some numbers and predictions for us.

Challenges and outcomes

One of our key team members, Rebecca, was working remotely during the sprint. To make things easier for her, we set up two cameras: one pointed at the whiteboard, the other focused on the rest of the sprint team sitting at the table. Next to that, we set up a monitor so we could see Rebecca.

Engaging in workshop activities is a lot harder when working remotely. Rebecca got around this by completing the activities on her own and sending us photos of her work.

Lessons

- Lightning talks are a great way to have each person contribute up front and feel invested in the process.

- Sprints are energy intensive. Make sure you’re in a good space with plenty of fresh air, comfortable chairs, and a breakout area. We like to split the five days up so that we get a weekend break.

- Give people plenty of notice to clear their schedules. Asking busy people to take five days from their schedule might not go down too well. Make sure they know why you’d like them there and what they should expect from the week. Send them an outline of the agenda. Ideally, have a chat in person and get them excited to be part of it.

- Invite the right people. It’s important to involve the right kind of people from different parts of the company in your sprint. The role they play in day-to-day work doesn’t matter too much for this. We’re all mainly using pens and paper, and the more types of brains in the room, the better. Looking back, what we really needed on our team was a customer support team member. They have experience and knowledge about our customers that we don’t have.

- Choose the right sprint problem. The project we chose for our first sprint wasn’t really suited to a design sprint. We went in with a well-defined problem and a suggested solution from the team, instead of a project that needed fresh ideas. This made activities like ‘How Might We’ seem redundant. The challenge we decided to tackle ended up being more of a data prototype (spreadsheets!). We used the week to validate assumptions about how we can better use data and how we can write a script to automate some internal processes. We got the prototype working and tested, but due to the nature of the project we will have to run this experiment in the background for a few months before any building happens.

Overall, this design sprint was a great team bonding experience and we felt pleased with what we achieved in such a short amount of time. Naturally, here at Optimal Workshop, we're experimenters at heart and we will keep exploring new ways to work across teams and find a good middle ground.

Further reading

- The Sprint Book - a book by Jake Knapp

- Design Sprint Kit - a whole bunch of presentation decks and activities for sprints

- Sprint Stories - some case studies that show the processes and value of design sprints

- How to run a remote design sprint without going crazy - an article by Jake Knapp

- Choosing the right sprint problem - a blog by Lisa Jansen