Summary: The equipment and tools you use to run your user testing sessions can make your life a lot easier. Here’s a quick guide.

It’s that time again. You’ve done the initial scoping, development and internal testing, and now you need to take the prototype of your new design and get some qualitative data on how it works and what needs to be improved before release. It’s time for the user testing to begin.

But the prospect of user testing raises an important question, and it’s one that many new user researchers deliberate over: What gear or equipment should I take with me? Well, never fear. We’re going to break down everything you need to consider in terms of equipment, from video recording through to qualitative notetaking.

Recording: Audio, screens and video

The ability to easily record usability tests and user interviews means that even if you miss something important during a session, you can go back later and see what you missed. There are three types of recording to keep in mind when it comes to user research: audio, video and screen recording. Below, we’ve put together a list of how you can capture each, along with a note on transcription. You shouldn’t have to buy any expensive gear – free software that runs on your phone or laptop should suffice.

- Audio – Forget dedicated sound recorders; recording apps for smartphones (iOS and Android) allow you to record user interviews and usability tests with ease and then upload the recordings to Google Drive or transfer them to your computer. Good options include Sony’s recording app for Android and Voice Memos, the built-in Apple recording app on iOS.

- Transcription – Once you’ve created a recording, you’ll no doubt want a text copy to work with. For this, you’ll need transcription software to take the audio and turn it into text. There are companies that will make transcriptions for you, but software like Transcribe means you can carry out the process yourself.

- Screen recording – Very useful during remote usability tests, screen recording software can show you exactly how participants react to the tasks you set out for them, even if you’re not in the room. OBS Studio is a good free option for both Mac and Windows users. You can also use QuickTime Player (also free, on Mac) if you’re running the test in person.

- Video – Recording your participants as they make their way through the various tasks in a usability test can provide useful reference material at the end of your testing sessions. You can refer back to specific points in a video to capture any detail you may have missed, and you can share video with stakeholders to demonstrate a point. If you don’t have access to a dedicated camera, consider mounting your smartphone on a tripod and recording that way.

Taking (and making use of) notes

Notetaking and qualitative user testing go hand in hand. For most user researchers, notetaking during a research session means busting out the Post-it notes and Sharpie pens, rushing to take down every observation and insight and then having to arduously transcribe these notes after the session – or spending hours in workshops trying to identify themes and patterns. This approach still has merit, as it’s often one of the best ways to get people who aren’t too familiar with user research involved in the process. With physical notes, you can gather people around a whiteboard and discuss what you’re looking at. What’s more, you can get them to engage with the material directly.

But there are digital alternatives. Qualitative notetaking software (like our very own Reframer) means you can bring a laptop into a user interview and take down observations directly in a secure environment. Even better, you can ask someone else to sit in as your notetaker, freeing you up to focus on running the session. Then, once you’ve run your tests, you can use the software for theme and pattern analysis, instead of having to schedule yet another full-day workshop.

Scheduling your user tests

Ah, participant scheduling. Perhaps one of the most time-consuming parts of the user testing process. Thankfully, software can drastically reduce the logistical burden.

Here are some useful pieces of software:

Dedicated scheduling tool Calendly is one of the most popular options for participant scheduling in the UX community. It’s really hands-off, in that you basically let the tool know when you’re available, share the Calendly link with your prospective participants, and then they select a time (from your available slots) that works for them. It also has a host of other features that are useful for researchers, like integrations and smart timezones.

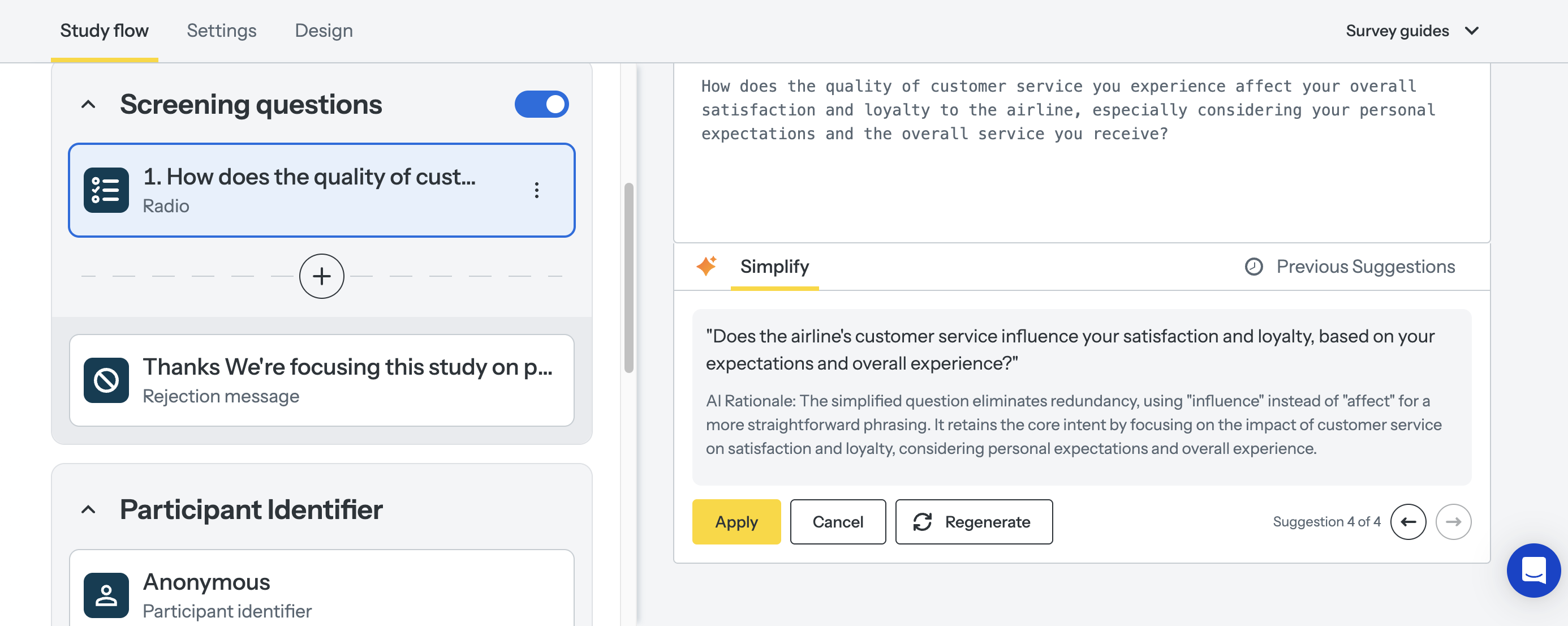

If you’re already using the Optimal Workshop platform, you can use our survey tool Questions as a fairly robust scheduling tool. Simply set up a study and add in prospective time slots. You can then use the multi-choice field option to have people select when they’re available to attend. You can also capture other data and avoid the usual email back and forth.
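If you’re adding time slots to a survey by hand, it can help to generate them rather than type each one out. Here’s a minimal Python sketch of that idea. The function names, session length and break length are our own illustrative assumptions and aren’t part of any particular scheduling tool.

```python
from datetime import datetime, timedelta


def build_time_slots(first_start, session_minutes, break_minutes, count):
    """Return `count` (start, end) pairs, each followed by a break."""
    slots = []
    start = first_start
    for _ in range(count):
        end = start + timedelta(minutes=session_minutes)
        slots.append((start, end))
        # The next session begins once the break is over.
        start = end + timedelta(minutes=break_minutes)
    return slots


def format_slot(slot):
    """Render a slot as a label you could paste into a multi-choice option."""
    start, end = slot
    return f"{start:%a %d %b, %H:%M}-{end:%H:%M}"


if __name__ == "__main__":
    # Hypothetical example: four 45-minute sessions with 15-minute breaks.
    for slot in build_time_slots(datetime(2024, 3, 4, 9, 0), 45, 15, 4):
        print(format_slot(slot))
```

Leaving a break between sessions gives you time to tidy up notes and reset the prototype before the next participant arrives.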

Storing your findings

One of the biggest challenges for user researchers is effectively storing and cataloging all of the research data that they start to build up. Whether it’s video recordings of usability tests, audio recordings or even transcripts of user interviews, you need to ensure that your data is A) easily accessible after the fact, and B) stored securely to protect your participants.

Here are some things to ask yourself when you store any piece of customer or user data:

- Who will have access to this data?

- How long do I plan to keep this data?

- Will this data be anonymized?

- If I’m keeping physical data on hand, where will it be stored?

Don’t make the mistake of thinking user data is ‘secure enough’, whether that’s on a company server that anyone can access, or even in an unlocked filing cabinet beneath your desk. Data privacy and security should always be at the top of your list of considerations. We won’t dive into best practices for participant data protection in this article; the main point is that you need to be vigilant. Wherever you end up storing information, make sure you understand who has access.
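One way to keep yourself honest about the questions above is to write the answers down next to each piece of data you store. The Python sketch below shows what that could look like. The `ResearchRecord` class, its field names and the 90-day retention period are hypothetical examples of ours, not a standard or a recommendation for your organization.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ResearchRecord:
    """Hypothetical metadata kept alongside one stored research artifact."""
    title: str
    collected_on: date
    allowed_roles: frozenset     # who will have access to this data?
    retention_days: int          # how long do we plan to keep it?
    anonymized: bool             # have identifying details been removed?
    physical_location: str = ""  # where any physical copy lives, if there is one

    def delete_by(self):
        """Last day the record may be kept under its retention period."""
        return self.collected_on + timedelta(days=self.retention_days)

    def is_expired(self, today):
        """True once `today` is past the delete-by date."""
        return today > self.delete_by()

    def can_access(self, role):
        """True if the given role is on the access list."""
        return role in self.allowed_roles


if __name__ == "__main__":
    # Hypothetical example record for a single interview recording.
    record = ResearchRecord(
        title="Usability test, participant 7 (audio)",
        collected_on=date(2024, 1, 10),
        allowed_roles=frozenset({"researcher", "research-lead"}),
        retention_days=90,
        anonymized=False,
    )
    print("Delete by:", record.delete_by())
    print("Designer can access:", record.can_access("designer"))
```

Even a simple record like this makes it obvious when something has no owner, no expiry date or no decision on anonymization.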

Wrap up

Hopefully, this guide has given you an overview of some of the tools and software to consider before you start your next user test. We’ve also got a number of other interesting articles that you can read right here on our blog.